“What did it take to be an early generative AI adopter?” is a question I’ve been asked many times.

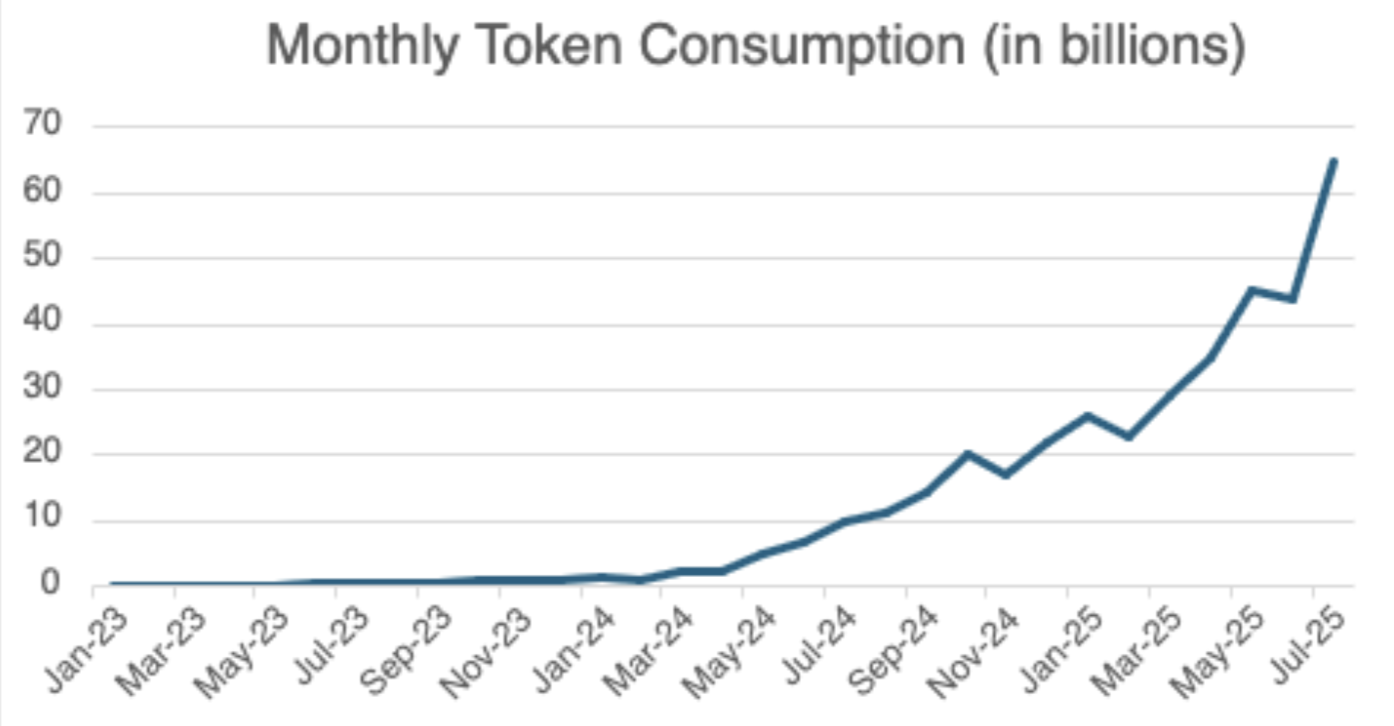

Since concrete adoption journey descriptions are relatively rare, here are the broad strokes of how JustAnswer came to use 2 billion tokens/day across 500 use cases and two dozen LLMs. Hopefully, it’ll inspire other organizations to accelerate their own journey.

First, JustAnswer had a strong impetus. As a business that connects people to experts to answer critical life questions, the advent of ChatGPT in November 2022 was quite the catalyst. We chose to embrace LLMs and to prioritize defining the future of AI+Human professional services. Your motivations may vary.

Second, we were able to deploy two engineering teams savvy in “classical” ML to pave the way for the rest of us (we have since dialed up to four teams). They built an amazing internal service that encapsulated the complexities of LLM usage governance, freeing the rest of us to dream of big, bold use cases. Vlad Mysla, Yuriy Muzychuk, Volodymyr Hovda, and the gang, thank you for your leadership! Nowadays, there are amazing open-source tools and many vendors offering that service. I recommend you use their wares!

Third, our tools evolved with our skillset and usage scale. For prompt development, we started with spreadsheets because they were simple and had zero startup cost, but we eventually built an in-house Prompt Studio once our needs became clear (no COTS alternatives existed at the time).

In fact, our toolkit is still evolving. For example, we are currently studying how to create more sophisticated evals while opening the practice up to more employees. For that, we’ve been evaluating vendors, experimenting with open-source libraries, and building prototypes. Like in sports and hobbies, be ready to upgrade the gear to fit the player’s level.

Fourth, we leveraged our culture of innovation to experiment and learn. See my previous posts on our hackathons, for example. We have done upwards of 600 hackathon projects and north of 1,000 production-based AI experiments so far, and the rate is accelerating. Making space for experimentation is a great starting point.

Fifth, we managed this AI embrace like a corporate transformation. We updated our goals, created education, ran workshops, reprioritized backlogs, procured resources, built relationships with foundational players, defined usage policies, set up dashboards, developed more customized tools, and shared wins and learnings often.

Some insights we gleaned along the way

- Despite our strong impetus, progress was slow at first. People were hesitant to move away from known tools and risk their business goals. It took three production-based experiments to achieve sufficiently large-scale results to trigger broad grassroots interest. The persistence of our early champions was key.

- We built many tools in-house because we were early and had a deep tech talent bench. Today, I’d leverage the ecosystem. In fact, we’ve started to rewrite some of our early building blocks.

- We have a strong power law in our usage: our top 10% use cases account for 90% of tokens and cost.

- Our token usage has grown in distinct step-function increments corresponding to the rollout of big platform applications, features, and widely used internal tools. In fact, tools and R&D activities account for nearly 20% of our token usage, with the rest going to support production.

- We have an 18:1 input-to-output token ratio. I suspect this is industry-dependent. I’d love to see yours.

- The flow of news and the rate of change are impossible to keep up with. I’ve tried. So we aren’t always on the latest and greatest because, sometimes, the opportunity cost is just too high. So, here too, we occasionally leverage tech debt.

- For all our wins and learnings to date, we know we’re only scratching the surface of the possibilities because our brainstorms are still huuuge.

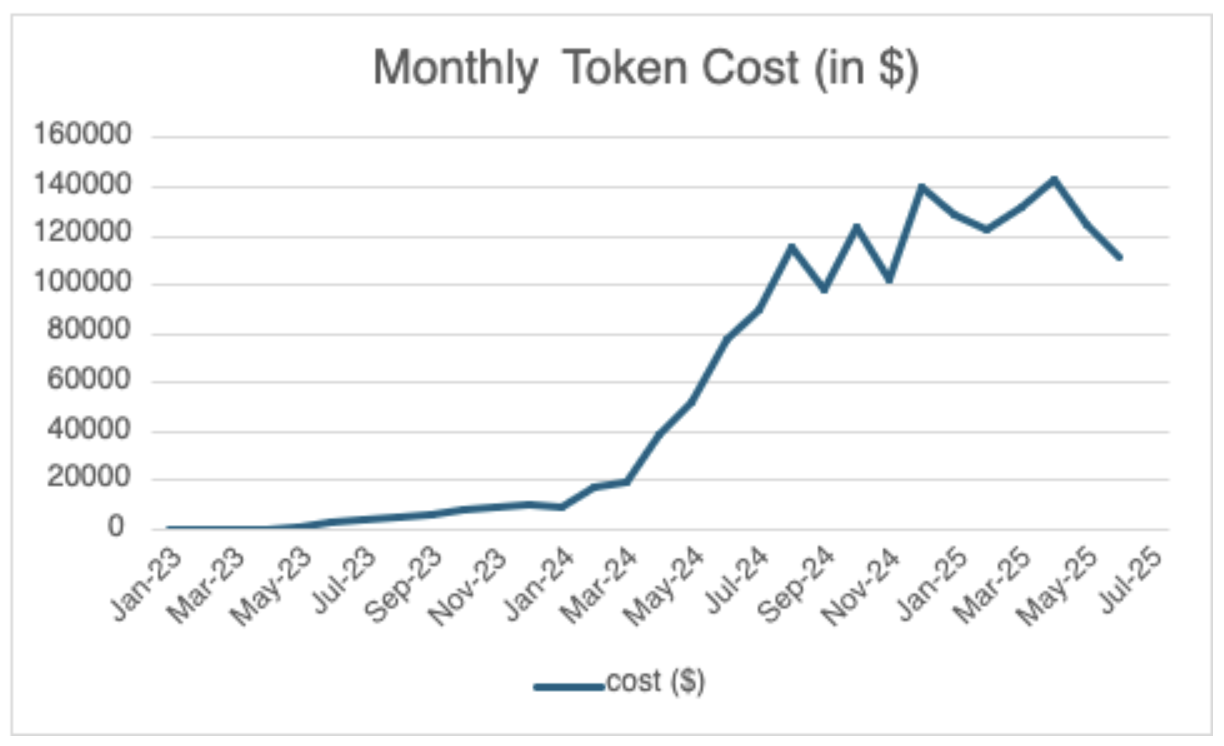

- Since mid-2024, token deflation and model replacement have helped us keep costs relatively flat despite our token consumption growing sevenfold. I’m as shocked as you are.
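
If you want to compare your own numbers with the ones above, here is a minimal sketch of how the usage metrics in this list (top-decile share, input-to-output ratio, share by category, and the price change implied by flat costs) could be computed from a per-use-case usage log. Everything in it is an assumption for illustration: the `UseCaseUsage` record, the `"production"` and `"internal"` category labels, the function names, and the sample figures are hypothetical and are not JustAnswer's actual tooling or data.

```python
from dataclasses import dataclass


@dataclass
class UseCaseUsage:
    """One row of a hypothetical per-use-case token log."""
    name: str
    category: str        # assumed labels: "production" or "internal" (tools and R&D)
    input_tokens: int
    output_tokens: int

    @property
    def total_tokens(self) -> int:
        return self.input_tokens + self.output_tokens


def top_share(usages, top_fraction=0.10):
    """Fraction of all tokens consumed by the heaviest `top_fraction` of use cases."""
    ranked = sorted((u.total_tokens for u in usages), reverse=True)
    top_n = max(1, round(len(ranked) * top_fraction))
    return sum(ranked[:top_n]) / sum(ranked)


def input_output_ratio(usages):
    """Aggregate input tokens divided by aggregate output tokens."""
    total_in = sum(u.input_tokens for u in usages)
    total_out = sum(u.output_tokens for u in usages)
    return total_in / total_out


def category_share(usages, category):
    """Fraction of all tokens attributed to one category."""
    total = sum(u.total_tokens for u in usages)
    return sum(u.total_tokens for u in usages if u.category == category) / total


def implied_price_change(token_growth, cost_growth=1.0):
    """Relative change in blended price per token, given growth multipliers."""
    return cost_growth / token_growth - 1.0


# Made-up sample: one heavy use case and nine light ones.
sample = [UseCaseUsage("heavy", "production", 900, 50)]
sample += [UseCaseUsage(f"light-{i}", "production", 10, 1) for i in range(6)]
sample += [UseCaseUsage(f"tool-{i}", "internal", 10, 1) for i in range(3)]

print(f"top 10% share:      {top_share(sample):.1%}")
print(f"input:output ratio: {input_output_ratio(sample):.1f}:1")
print(f"internal share:     {category_share(sample, 'internal'):.1%}")
print(f"price change at 7x tokens, flat cost: {implied_price_change(7.0):.1%}")
```

The last function makes the arithmetic behind the cost observation explicit: if tokens grow sevenfold while total cost stays flat, the blended price per token must have fallen to one seventh of its earlier level, a drop of roughly 86%.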

Since I have no hope of completely covering the work of 1,000 people over nearly three years, I’ll draw a line here and invite your questions and comments on anything of interest. Finally, and most importantly, it’s been one of the most fun things we’ve had a chance to do! In case you needed one more reason to jump in.