Originally published on the Pearl Tech Blog.

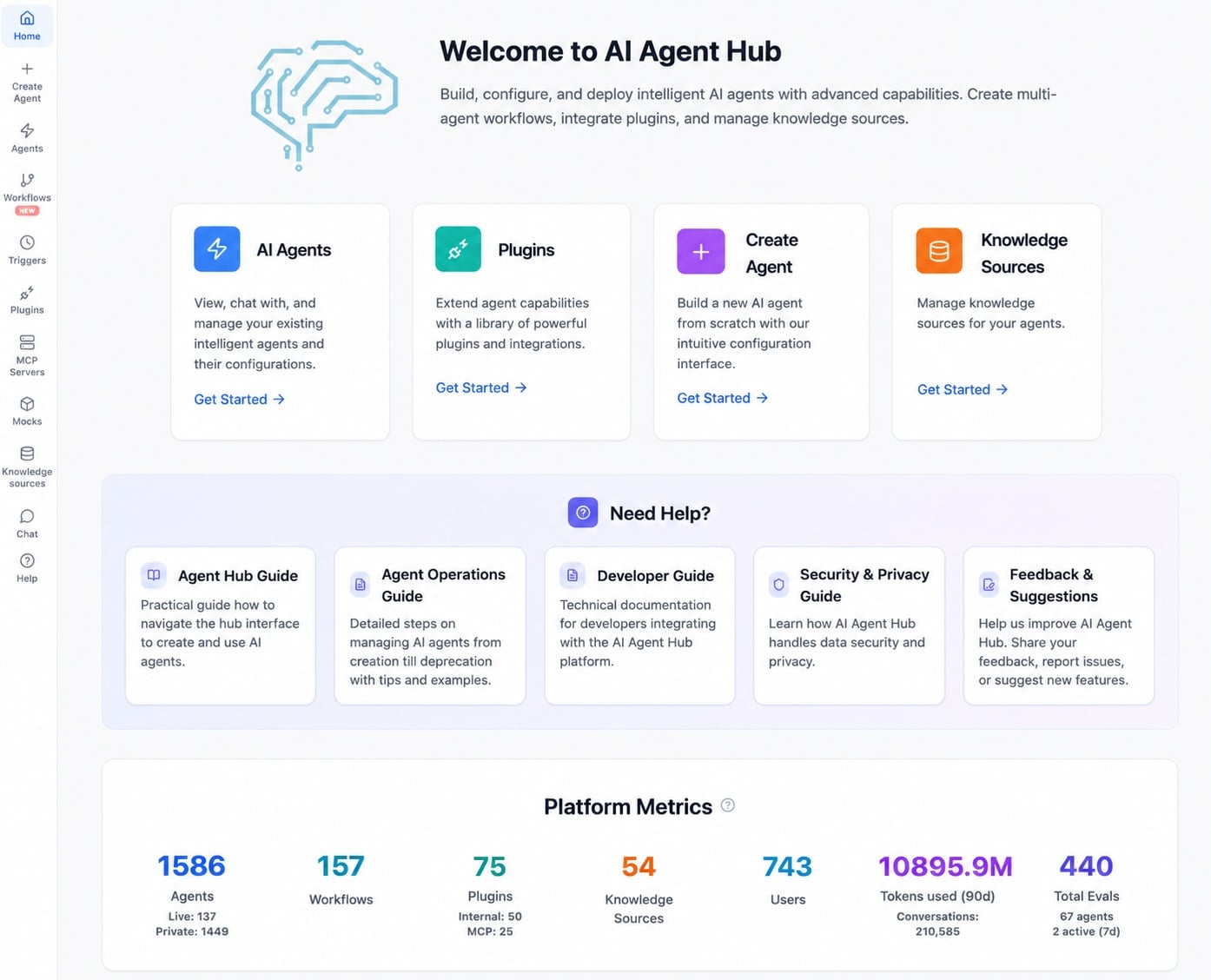

We now have 1,500 AI agents. Seven months ago, we had just 75. This 20x increase is all sunshine and rainbows, right? Not quite. While building agents got a lot easier, owning and operating them is still harder than we’d like. Here’s a candid look at what that means in practice, what’s going well and what isn’t, and what we’re going to do about it.

Agents Everywhere All at Once

In early 2025, building agents became reasonably possible, so we evaluated the budding commercial options but decided to build our own MVP agentic platform. Our goal was to automate workflows for our 750 employees, since we had already made so much progress adding AI features to our professional services marketplace, where we invested more and earlier. More on that another time.

Today, agents are so trendy that even buying a GPU or a Mac mini has become a bit of a joke. We are part of that trend, thanks to loads of hands-on workshops, new platform capabilities, and much celebration of early wins, especially during our famous hackathons.

Six Popular Agents

Demo Cat* assistant

Pearl’s coolest ritual may be the Friday company-wide demo of recent learnings. But its orchestration across Ukraine, India, and the USA led to frustration for presenters and organizers: battles over time slots, time zone confusion, duplicate entries, wiki synopses lacking key details, demos that missed key learning elements (big picture, hypothesis, experiment, results, impact, next steps), preso archives with inaccessible links, etc. As we grew the company and accelerated our learning rate via value stream improvements, the friction grew high enough that some teams hesitated to go through the hassle of sharing their learnings. Now there’s an agent for that and a bit less insanity in that corner of our world.

* We have a strong feline theme at Pearl! Our projects are either cheetahs (nimble and fast) or tigers (big and strong), for example.

People Ops Agent

Need to find someone with specific expertise, consult an internal policy, see a department’s past and present goals and OKRs, or figure out who on a given team is not out on PTO today? Our people ops agent cross-references the wiki, the HRIS, and Lattice, with proper role-based access control (RBAC). Very popular.

Production Incident Assistant

We believe that production incidents should be learned from. So, we have always catalogued the events (who, what, when, where, how) and held post-incident retrospectives. This assistant takes the tedium out of it by scouring all the right channels to package the information and publicize the dossier.

Work Radar

You’re coming back from a week of PTO and wondering what you missed? This agent’s for you. It looks at what happened in your usual messaging channels, email, wiki pages, document folders, and meeting recordings and notes, and compiles a persona-appropriate summary of updates, action items, upcoming milestones, and useful references.

Agentic Workflow Copilot

Got a complex, multi-agent workflow and find the drag-and-drop interface intimidating? The Workflow Copilot holds your hand and steers you clear of the most typical pitfalls. In about 15 seconds (P50 latency, with browser tool steps by far the slowest) and for less than a buck of tokens, you are in business.

Agent Creator Copilot

And, of course, we have the vibe coding way of creating AI Agents. It’s self-referential in the most delightful way and a contributor to our 20x factor. It’s been invoked 400 times this past week. It uses approximately 160K tokens per invocation with a 99:1 input-to-output ratio.
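To put those numbers in perspective, here is a quick back-of-the-envelope sketch. It uses only the figures quoted above (400 invocations per week, roughly 160K tokens each, a 99:1 input-to-output ratio); the function and variable names are illustrative, and no pricing is assumed.

```python
# Figures quoted in the post; all names here are illustrative.
INVOCATIONS_PER_WEEK = 400
TOKENS_PER_INVOCATION = 160_000
INPUT_PARTS, OUTPUT_PARTS = 99, 1  # 99:1 input-to-output ratio


def split_tokens(total, input_parts, output_parts):
    """Split a token total into (input, output) according to a ratio."""
    output = total * output_parts // (input_parts + output_parts)
    return total - output, output


input_tokens, output_tokens = split_tokens(
    TOKENS_PER_INVOCATION, INPUT_PARTS, OUTPUT_PARTS
)
weekly_total = INVOCATIONS_PER_WEEK * TOKENS_PER_INVOCATION

print(f"per invocation: {input_tokens:,} input, {output_tokens:,} output")
print(f"per week: {weekly_total:,} tokens")
```

In other words, each invocation reads about 158,400 tokens to write about 1,600, and the week adds up to roughly 64 million tokens. Nearly all of the spend is context, not generation.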

Agent Types

Along the way, three kinds of agents have emerged.

Personal experiments remain private to their creator. They represent 80% of all agents on our platform. We frequently see an uptick following one of our workshops, which are open to all and sometimes attended by 15% of the entire company. In these wild west days, people need a private place to learn the ropes. Our usage stats show these agents are used briefly, then mostly ignored.

Team-specific agents are custom-built for a very narrow and specialized workflow that usually pertains to a single team. Their access is group-private because there’s little upside to exposing such single-purpose tools to others. Only a few dozen agents fit this category, but some are among our most used ones.

Shared, high-usage agents comprise about 10% of our stable. Not only do they handle useful work, but they also serve as public examples that inspire others. I wish we had more of those.

Why Still Build In-House?

We have been building our agentic platform atop Microsoft’s Agent Framework, even though commercial platforms and open-source software have come a long way since we launched in early 2025. We suspect the day might come when we may want to stop building and use someone else’s software, but we’re not quite there yet. In fact, we just had a review last week, and we’ll continue to mostly roll our own through one small but mighty team for a bit longer.

Factoring into that decision is the speed at which the agentic stack mutates; it makes us hesitant to commit. Meanwhile, we do our best to build in modular fashion, leveraging emerging standards as interfaces (MCP, A2A, RAG, AG-UI, skills, etc.) and delineating modules that could eventually be replaced. And to inform our thinking, we’re creating a Thoughtworks-inspired radar.

Surprise Escalation

We originally built our agentic platform to automate daily gruntwork. We thought of it as quite distinct from weaving generative AI into our marketplace, where LLMs are used in hundreds of use cases, from ad generation, to funnel hyper-customization, to e-commerce bots, to consumer-expert matching, and countless others.

But to our surprise, some teams experimented with using our newfound agentic capabilities in our production environment. Since their answer to the Sean Ellis test is “out of our cold, dead hands”, we’re now adding industrial-grade ‘ilities’, like Observability, Recoverability, and Scalability, much sooner than expected. If an agent fails and it impacts our marketplace, it’s no longer just an experiment gone awry; it’s a standard service outage.

Agent Operations Is a Responsibility

Building AI agents has become a lot easier, but caring for them is a new reality for many of our employees. Until recently, it was engineers who created software, with the full expectation that they’d have to maintain it for the long haul.

Now, just about anyone can become a parent to an agent, but it’s a bit messy. People “adopt” agents, but then they have to feed them (data), train them (evals), and clean up after them (ilities). That’s a whole lot of people unaccustomed to carrying a pager.

Software creation might well be trending towards free, but it’s more free as in puppy than beer. We’ve defined what good agentic operations should look like. That was the easy part. Now, we must figure out how to bring this vision to life.

Judging Performance

Testing and evals are still hard. Not the application of evals, but the definition of what “good” is. For single-volley situations, it’s usually pretty easy. For multi-turn applications, especially those that include a human in the middle, we struggle more, as we do for more complex and longer-horizon workflows.
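To illustrate why the single-volley case is the easy one, here is a minimal sketch of such an eval. Everything in it is hypothetical: the stub agent, the two test cases, and the substring check are made up for the example and do not describe the platform’s actual eval harness. The point is only that “good” reduces to one prompt, one reply, and one check.

```python
def run_single_turn_eval(agent, cases):
    """Return the fraction of cases whose reply contains the expected phrase."""
    passed = sum(
        1
        for prompt, expected in cases
        if expected.lower() in agent(prompt).lower()
    )
    return passed / len(cases)


def stub_agent(prompt):
    # Hypothetical stand-in for a real agent call.
    answers = {"Who approves PTO?": "Your direct manager approves PTO."}
    return answers.get(prompt, "I don't know.")


# Hypothetical test cases: (prompt, phrase the reply must contain).
cases = [
    ("Who approves PTO?", "direct manager"),
    ("What is the expense limit?", "500"),
]

pass_rate = run_single_turn_eval(stub_agent, cases)
print(f"pass rate: {pass_rate:.0%}")
```

A multi-turn or human-in-the-loop workflow has no equally obvious check to plug into that `if`, which is where the difficulty starts.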

We still rely quite a bit on humans to validate agentic performance at build time and to keep an eye on it at run time. That’s problematic because, as we went up the abstraction ladder from prompt engineering to context engineering to harness engineering, AI should now be in charge of creating and testing hypotheses. But that can only happen if it knows which hill to climb and which way is up.

With better evals, we could iterate faster and, therefore, compound our learnings faster. The team already knows it. Our Q1 hackathon in February 2026 had six projects focused on AI-driven self-improvement. Andrej Karpathy’s viral autoresearch post around the same time confirmed this is a valuable direction to explore further, which we will.

Assessing Value

When we started this journey, we were content with measuring vanity metrics (agent, user, and session counts) because they are prerequisites to eventual impact. Now, although everyone involved feels that agents are useful, and nobody is advocating going back, we are still discussing how best to measure outcomes and ROI. Toil removal? Employee satisfaction? Time saved? Value of the work automated? Cost of delay of future work brought in?

The Roadmap

Over the next couple of weeks, our agents will become ‘ambient’. They will respond to an increased variety of events like email, messages, workflow completion, timers, alerts, files, and more.
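As a rough mental model of what ‘ambient’ could mean, the sketch below routes incoming events to whichever agents subscribed to that kind of event. It is an assumption-laden illustration, not a description of our implementation: `Event`, `EventRouter`, the event kinds, and the handlers are all hypothetical names invented for this example.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Event:
    kind: str  # e.g. "email", "timer", "alert" (hypothetical kinds)
    payload: dict = field(default_factory=dict)


class EventRouter:
    """Hypothetical registry mapping event kinds to agent handlers."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, kind, handler):
        self._handlers[kind].append(handler)

    def dispatch(self, event):
        # Run every handler subscribed to this kind and collect the results.
        # Events with no subscribers simply yield an empty list.
        return [handler(event) for handler in self._handlers.get(event.kind, [])]


router = EventRouter()
router.subscribe("email", lambda e: f"triage email from {e.payload['sender']}")
router.subscribe("timer", lambda e: "run weekly digest")

results = router.dispatch(Event("email", {"sender": "ops@example.com"}))
unhandled = router.dispatch(Event("alert"))
print(results, unhandled)
```

The appeal of this shape is that adding a new trigger (files, workflow completion, and so on) means adding a subscription, not changing the agents themselves.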

A more sophisticated memory system is in the cards. And let’s not forget about the ilities.

There are also opportunities for better UX. Like most people, we, too, seem to worship at the altar of convenience. In a world where ChatGPT or Claude are but a keystroke away, our own platform needs to be just as seamless to use. I sometimes find myself reaching for old familiar tools for simple use cases out of habit. I expect that design will become a focal point.

One Platform to Rule Them All?

There’s an active discussion about whether we should consolidate some of our early genAI workflows and tools onto this agentic platform. Some of those tools date from early 2023 and inserted basic LLM capabilities into legacy tools. Now, anything new is agentic-first, so we just might consolidate tooling, but leave old workflows alone. But with so much low-hanging fruit to pick, I don’t expect huge migrations any time soon.

Big Ambitions

Soon, we’ll systematize the operation and maintenance of agents in production at scale. And we’d better do so quickly because our employees are creating agents at an accelerating pace.

With this more refined proficiency, I’m looking forward to bringing to life two big ideas:

- Drastically accelerating our end-to-end lead time (aka concept-to-cash) company-wide. As a data-driven organization, we’ve been measuring and optimizing it for a few years. For example, we executed 650 projects in 2025. Some folks hypothesize that we could go 3x faster by optimizing across all functions. That would create incredible value.

- Building self-improving experiences. I believe it may be possible to have a self-optimizing system where every customer, expert, or employee interaction makes our system smarter, not in an async, batched refinement process, but in real time. Ambitious? Maybe. But if not that, what are we even doing?

We’ll let you know how that pans out soon.

Imua*

By JP Beaudry, Pearl CTO

* Imua: move forward with purpose